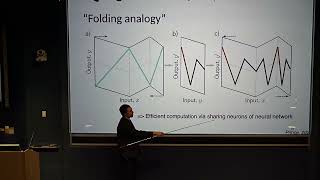

Xavier Bresson: "Convolutional Neural Networks on Graphs"

- Published on Feb 15, 2018

- New Deep Learning Techniques 2018

"Convolutional Neural Networks on Graphs"

Xavier Bresson, Nanyang Technological University, Singapore

Abstract: Convolutional neural networks have greatly improved state-of-the-art performance in computer vision and speech analysis tasks, due to their strong ability to extract multiple levels of representations of data. In this talk, we are interested in generalizing convolutional neural networks from low-dimensional regular grids, where image, video and speech are represented, to high-dimensional irregular domains, such as social networks, telecommunication networks, or word embeddings. We present a formulation of convolutional neural networks on graphs in the context of spectral graph theory, which provides the necessary mathematical background and efficient numerical schemes to design fast localized convolutional filters on graphs. Numerical experiments demonstrate the ability of the system to learn local stationary features on graphs.

Institute for Pure and Applied Mathematics, UCLA

February 7, 2018

For more information: www.ipam.ucla.edu/programs/wor... - Science & Technology

Very interesting talk and introduction to a topic I didn't know about. Thanks! :)

31:40 for Graph NN

Highly recommended!

Link(s) to the slides:

helper.ipam.ucla.edu/publications/dlt2018/dlt2018_14506.pdf

or

www.dropbox.com/s/0nbeo7jjn2l01us/talk_IPAM_07Feb18.pdf?dl=0

Thank you so much! I was just about to ask for it.

Why do we need to extract eigenvectors and eigenvalues from the graph Laplacian? What is the motivation? How does it help me?
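One way to see the motivation, sketched under assumptions rather than taken from the talk: in spectral graph theory the Laplacian eigenvectors play the role of Fourier modes on the graph, and the eigenvalues play the role of frequencies, so a "convolution" can be defined as a pointwise multiplication in that basis. The 4-node path graph, the `spectral_filter` helper, and the `low_pass` kernel below are all hypothetical choices made for illustration.

```python
import numpy as np

# Hypothetical example graph: an unweighted path with 4 nodes.
A = np.array([[0, 1, 0, 0],
              [1, 0, 1, 0],
              [0, 1, 0, 1],
              [0, 0, 1, 0]], dtype=float)
D = np.diag(A.sum(axis=1))
L = D - A  # combinatorial graph Laplacian (symmetric)

# eigh is meant for symmetric matrices: eigenvalues come back in ascending
# order and the columns of Phi are orthonormal eigenvectors.
lam, Phi = np.linalg.eigh(L)

def spectral_filter(x, g):
    """Filter a graph signal x with a spectral kernel g (illustrative helper)."""
    x_hat = Phi.T @ x               # graph Fourier transform
    return Phi @ (g(lam) * x_hat)   # scale each frequency, then transform back

# A unit impulse on node 0, smoothed by an assumed low-pass kernel.
x = np.array([1.0, 0.0, 0.0, 0.0])
low_pass = lambda lam: np.exp(-2.0 * lam)
y = spectral_filter(x, low_pass)
```

The impulse spreads to neighbouring nodes because high-frequency components (large eigenvalues) are damped. A learned graph CNN filter would replace the fixed `low_pass` kernel with trainable parameters; the abstract's "efficient numerical schemes" suggest the full eigendecomposition is avoided in practice, which this sketch does not attempt.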

18:00 for Graph Coarsening

At 12:00 I think the Eigendecomposition is Δ = Φ Λ Φ'

right

Lambda is diagonal, so it is symmetric, and the Laplacian is symmetric too, I believe, so if you transpose you get Laplacian = phi' Lambda phi and he didn't err.
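Whether Φ Λ Φ' or Φ' Λ Φ is the right form seems to depend on whether the eigenvectors are stored as the columns or the rows of Φ. A quick numerical check, assuming NumPy's convention (eigenvectors in columns) and a randomly generated weighted graph that is purely illustrative:

```python
import numpy as np

# Illustrative random symmetric weighted graph on 5 nodes.
rng = np.random.default_rng(0)
W = rng.random((5, 5))
A = np.triu(W, 1)
A = A + A.T                          # symmetric adjacency, zero diagonal
L = np.diag(A.sum(axis=1)) - A       # symmetric graph Laplacian

lam, Phi = np.linalg.eigh(L)         # eigenvectors are the COLUMNS of Phi
Lam = np.diag(lam)

# Column convention: L = Phi Lam Phi^T holds.
cols_ok = np.allclose(Phi @ Lam @ Phi.T, L)

# Same Phi plugged into the other form: generally does not reproduce L.
rows_form = np.allclose(Phi.T @ Lam @ Phi, L)

# Row convention: if Psi stores eigenvectors as ROWS, L = Psi^T Lam Psi holds.
Psi = Phi.T
rows_ok = np.allclose(Psi.T @ Lam @ Psi, L)

print(cols_ok, rows_form, rows_ok)
```

Transposing Φ Λ Φ' gives back Φ Λ Φ' itself, so symmetry alone does not turn one form into the other. Both are valid decompositions only under their own convention for Φ.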

That looks like an emoticon

@keeperofthelight9681 More specifically, a cat?