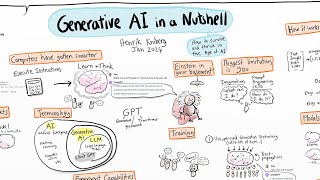

What are Generative AI models?

- Published on 26 Apr 2024

- Learn more about watsonx → ibm.biz/BdvxDz

Generative AI has stunned the world with its ability to create realistic images, code, and dialogue. Here, IBM expert Kate Soule explains how a popular form of generative AI, large language models, works and what it can do for enterprise.

#LLMs #GenerativeAI #FoundationModels #EnterpriseAI #Watsonx

Kate, this was awesome. It is so refreshing to find presenters who can take complicated material and explain it, in just a few minutes, in a fashion that makes it so reachable.

So far, by far the best video on Generative AI I’ve seen.

Great explanation at a very basic level, very easy to follow the whole video. Thank you very much.

The explanation, I'm assuming, is great for a technical person who already knows a lot about generative AI models, but for the larger public you need to explain it in a much simpler way, without technical terms. Analogies help a lot.

Over the last week, I have been trying to find out the differences between Generative AI and Foundation Models, but could not find the relevant and exact content and this one video has cleared all of that, too good!

Great presentation. Making such a complex topic seem approachable means there is a lot of work behind it! Thanks

The most concise summary / explanation of what is Generative AI 👍💯

These videos are really amazing and deserve waaaay more attention and credit. IBM Technology, you are doing a great Job!

I love that this is on YouTube for free, thank you very much for a great basic understanding! :)

Outstanding presentation, thank you IBM

Excellent presentation Kate ! Thank you !

Outstanding explanation. Thank you!!! Please continue such great work.

Thank you

Very very... very good video. How to use 9 minutes to understand the concepts of Generative AI, Foundation Models, Large Language Models, etc. Awesome!

These videos are simply amazing, thank you IBM.

Thank you so much for the introduction to a very important topic.

Great insights into the concepts of Generative AI. Thanks

Very clear and systematic introduction! Thanks

Thanks Kate for this awesome video. Interesting to see the vast use cases of generative AI other than chatbots.

I'm a '70s AI guy. I have done many parsers and translators. I have done old-style theorem proving and structure learning. I keep asking myself, in this multi-layer perceptron formulation, how is the parse tree represented? How are logic statements represented? How is knowledge manipulated? All I seem to get is generalities about training and "the next word". Where should I be looking?

Isn’t generative ai another fancy name for “sampling” and learning the “ distribution” that generates it..... Facebook has already done it and zuck got called in for hearing doing this....now they are selling it with a new label with black box function approximation power of neural networks

Kate, this is really highly informative and one of the best videos I came across for gen ai.

She just explained the whole AI bubble so awesomely. Kate, great job; the best video I have watched so far on the internet. 👏👏👏

Fantastic presentation and a great way to promote IBM offerings.

Very very clear presentation! thanks!

Awesome Introduction!

Looks like I'm hooked on this topic.

then you're fish since you got hooked

Your writing in reverse is surprisingly skillful; great coverage of models. Thank you

It's reversed dear

@@wungus-bongo how does it work then?

@@Milad_digital look it up, it involves mirrors and other screens

@@titoadesanya9369 thanks I was not able to concentrate in the topic because it kept distracting me LOL

@@vriverad same problem with me. How do they create these videos?

Excellent presentation. My only gripe is the masses will still think generative AI is simply predicting the next word, one of Geoffrey Hinton's concerns. There's a lot more to it than that. When you ask a question, it needs to identify related material in the dataset and then construct specific parameters of the neural net in a way that addresses the structure and meaning of your input that makes sense. Kind of like what we do when we piece together sentences based on our experience. That requires great intelligence. This is why GenAI can already outperform humans in many academic and operational benchmarks, and it's beating us humans in more and more of these by the month. Once you go fully multimodal in these endeavours, we'll very quickly reach AGI.

Didn’t she mention this during 3:20?

Very nice insights into the topic. Well done Kate

Thanks Kate for simplifying the AI model explanation for the general layman. Interesting time to be in, to see how AI is evolving and how what science fiction movies have predicted all these years is becoming a reality.

Have you actually watched those movies.

@@lamboseeker238 There are less dramatic movies like 'Her' which paint a more realistic use case of AI than the Terminator does. I suggest you watch that one, which is relevant to the current AI assistant market. The "Machines Take Over the Universe" plot is a dystopian fantasy not rooted in reality.

Thanks IBM Research Team, these videos are amazing as a learning resource

Excellent explanation, top of top

Great explanation. Thank you.

As an IBM Employee this video makes me proud ❤

When I was a kid in the 60s our local TV weatherman (Ralph Ramos) actually wrote like that for real. He stood behind a clear panel with a map outline and used a grease pencil to write temperatures on it backwards.

You know she is not writing backwards, right? That is the whole idea of writing on a piece of glass and inverting the image.

It is called lightboard or learning glass, btw you can ask gpt about it...

Very precise and accurate video that clearly explains what's coming up in the future! Thanks, Ma'am.

Thanks. Easy to follow for non-tech. Great!

Very well explained

Great content explained with simplicity!

I loved it! Thanks for this amazing video :)

good work and fluid presentation, many thanks

Amazing explanation!!

Interesting. Generative A.I: "predict the last word of the sentence based off the words it saw before". My very first A.I. program in college (ages ago) was a game "guess what I'm thinking". For each wrong guess the program was given a clue, thus building its knowledge-base. Prompt: What are you thinking of? (input: animal) (program: shark) (no. hint: mammal) (program: dog)(no. hint: has a trunk)...(no. hint: large ears)....(no. hint: grey) (program needs the answer: elephant). The program now has the definition of an elephant.

Without knowing much about Generative A.I. it seems similar except "on steroids", lol "on the internet of data". Will have to follow the links above to learn more.

Kate Soule, great explanation. Thanks.

Are the 2 latest foundation models you mentioned, MoLFormer and the Earth Science model for climate change, available as demos?

Good Job Kate. Also, good use of the whiteboard and colour annotations.

It helped that you also used simple language, didn't overcrowd the whiteboard, and effectively used spacing between the concepts, as well as the groupings for the workflow components, i.e. FM/prompting on the right of the board and the LLM concepts on the left-hand side.

Thanks for sharing your gift of teaching. your contribution is appreciated.

If you have a course or workshop that you teach on GenAI, I would be interested in learning more. (hint, hint)

Cheers

Excellent explanation and breakdown by Kate, brilliant woman !

Was just amazing, thanks.

Excellent explanation.

Thank you that was very helpful

Great explanation about the Generative AI Models

I think your last proposition will make a great impact to the human kind with the help of Generative AI

Like your podcasts(?) guys! Awesome!

Very Well Explained !

This video offers concise and informative insights into the AI journey, perfect for those who are new to the topic and seeking a clear understanding

using this to study for university, thank you!

Really well explained

Kate, great video!

Good Explanation Kate.

Is there an assembly LLM kit sold in Amazon that I could assemble and understand?

Truly nice way of explaining.

.

I just love these videos

I know someone else who wrote inverted..he also painted well. impressive

Very good explanation for a beginner like me

Excellent. Thanks

Informative video. Thanks

Great Video. Very Clear. Thanks.

Outstanding explanation. I'm even more impressed by your ability to invert your writing effortlessly... mind-blowing!

congratulations!

I was thinking the same. Then considered that if she were to write on the glass board 'normally' and then flip the video horizontally, it'd appear as if she was writing in reverse and flipped at the same time. Not as impressive as a skill, tho...

Exactly!! I kept getting distracted by that!!

Great video!!! IBM used to be the undisputed leader of computer technology. Would be great to see Big Blue back in the game and become a leader again!!!! (Apple's market cap 2 trillion dollars, Nvidia's market cap 1 trillion dollars, IBM (the former world leader of computer technology) 120 billion dollars). Hope to see a publicly-available LLM from IBM soon!!!!

This "blackboard" is so good :)

As impressive as AI is, Kate writing inverted is just as impressive.

I got distracted when she wrote LLM and for the whole 8+ mins my full attention was how is she doing that.

@@vinallu same here, I was wondering whether a different technique was used for the whole production. I am still curious: is she writing inverted, or is there some interesting technology here?

@@shutterbug-sr It's called a lightboard, learning glass, etc. It's current state-of-the-art presentation technology.

@@goodtech_rules . Thanks! "A lightboard allows a presenter to write and draw while maintaining eye contact to deliver their message in a natural and engaging way. Video is filmed through the glass and mirrored so the orientation appears correct to the viewer"

I was thinking the same thing!!

I think I can make a slight correction here. Let me know if I'm wrong. The idea of "generative" in Generative AI isn't the ability to "generate" the next word, but that the model is able to generate new observations (or data points) based on the distributions in the data. As she describes, LLMs are part of the idea of foundation models, and LLMs are the NLP derivative of FMs that are able to sample from those distributions of words (or tokens).

Thanks… I was a bit confused on first viewing the video, then I saw your comment and the missing part clicked for me… No doubt she has explained a very complex concept in the most easy-to-understand manner…

In an NLP setting, predicting next token is actually generating a new observation.

It is just like she said, according to what I have read, but I would like an expert to give input.

It's the same thing. You're just talking about a different shade of grey, there's like at least 50 shades.

yup!
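Editor's note on the thread above: the two views ("predicting the next word" and "sampling new observations from a learned distribution") can be seen side by side in a toy example. The sketch below is only an illustration under strong assumptions: it uses a tiny bigram count model, not a neural network or anything shown in the video, and the corpus and function names (`train_bigram`, `next_token_distribution`, `generate`) are made up for this note.

```python
import random
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def next_token_distribution(counts, prev):
    """Turn the raw follow-up counts for `prev` into probabilities."""
    total = sum(counts[prev].values())
    return {tok: c / total for tok, c in counts[prev].items()}


def generate(counts, start, length, rng):
    """Repeatedly sample a next token; the result is a new sequence."""
    out = [start]
    for _ in range(length):
        dist = next_token_distribution(counts, out[-1])
        if not dist:  # the last token was never followed by anything
            break
        tokens, probs = zip(*dist.items())
        out.append(rng.choices(tokens, weights=probs, k=1)[0])
    return out


# Hypothetical toy corpus, chosen only for illustration.
corpus = "the cat sat on the mat and the cat ate the fish".split()
counts = train_bigram(corpus)

print(next_token_distribution(counts, "the"))
print(" ".join(generate(counts, "the", 8, random.Random(0))))
```

Each sampling step is a next-token prediction, and chaining those steps yields a sequence that may never have appeared in the training text, which is the sense in which the reply says predicting the next token is generating a new observation.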

amazing and great presentation

Thank you, for the nice presentation

Thank you so much for such an amazing video! It is informative, helpful and engaging. 😀

thanks kate this is useful to me.

Awesome. Thank you

Great explanation of course! I'm also equally impressed with her (mirror?) writing skill.

See ibm.biz/write-backwards for more details

Yes, really informative. Thank you.

Quality vid IBM.

Because the video is flipped horizontally, the text she writes may appear readable. This is a clever solution. Additionally, wearing black or dark clothes could further improve the text's readability.

Can we take a second just to appreciate the skill necessary to write proper readable handwritten text in reverse?

I would assume the video is inverted after being filmed? 🤷♀️

Great explanation.

Thank you

It's highly impressive

69th comment! As a researcher, it feels nice to see IBM will be researching these great new innovations with me! Best of luck IBM, you are going to need it!

Thank you.

I’m more impressed that they mirrored the video so that her handwriting was flipped around for us.

See ibm.biz/write-backwards for more

Great info Kate. What kind of White Board are you writing on? It's so cool!

Thank You

Good explanation

nice video!!

I think I have a different viewpoint on the claim that foundation models are a part of Generative AI (2:35). Foundation models can drive predictive AI as well as Generative AI, depending on the type of neural nets we use; as the name suggests, they act as a foundation for both, along with customisation and automation.

@1:55 Isn't the training supervised rather than unsupervised? It is predicting the next word that follows the given text: the next word is known.
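Editor's note on the question above: this kind of training is commonly called self-supervised. There is a known target for every prediction, as the commenter says, but nobody labels it by hand; the target is simply the next word of the raw text. A minimal sketch of how such training pairs can be cut from unlabeled text follows. The function name `next_word_examples`, the `context_size` parameter, and the sample sentence are assumptions made for illustration, not details taken from the video.

```python
def next_word_examples(text, context_size=3):
    """Build (context, target) pairs from raw text.

    The target for each position is just the word that actually
    comes next, so no human-written labels are needed.
    """
    words = text.split()
    examples = []
    for i in range(1, len(words)):
        context = words[max(0, i - context_size):i]
        examples.append((context, words[i]))
    return examples


examples = next_word_examples("no use crying over spilled milk")
for context, target in examples:
    print(context, "->", target)
```

So the objective looks supervised once the pairs exist, while the data collection is unsupervised in the sense that it needs only plain text.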

Very informative; thank you!

very nice presentation thank you

As for the trust issue, is there some kind of "bill of materials" for what has been processed which could be reviewed? I think this would be crucial for transparency.

I believe you are looking for what is called a Facts Sheet which is part of AI Governance

Great work

Book Recommendation: "A Primer to the 42 Most commonly used Machine Learning Algorithms (With Code Samples)."

Thanks

thanks to ch*tgpt i've finally been able to build my own RNN in c/c++ and dozens of other things. simple series prediction (audio spectral extension) took a few hours to train a FFNN to satisfactory results, but training a RNN on 96-input one-hot text chars has taken over a month on my little asus L210 and i'm maybe a third of the way based on loss.

there's one thing i've observed in decades of procedural media programming: procedure tends to express outside of human discretion and can expand or distend our experience and concept of expression. plus, per burroughs' cut-up method, you do tend to get a bit of EVP and transduction in procedure. aheheh.

but yeah i was looking forward to swiftly crosstraining my text model once trained :P

for initial input, an analysis of children's fairy tales and an eloquent and wordy suffragist were the best open source i could find. really tho you know i oughta just put out a few words eh.

Nice. Are you writing over mirrors, or is this some digital tool?

Thank you! I also commend your ability to write backwards so legibly

Awesome