Deploy Transformer Models in the Browser with


- Published on 16 Sep 2024

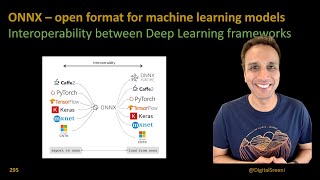

- In this video we demo how to use #ONNXRuntime Web with a distilled BERT model to run inference on-device in the browser with #JavaScript. This demo is based on the amazing work of our community member Jo Kristian Bergum!

Connect with / jobergum

Source Link: github.com/job...

Blog: / moving-ml-inference-fr...

Model: huggingface.co...

Dataset: huggingface.co...

#machinelearning #transformers #pytorch #onnx #onnxruntime #JavaScript #web

This instructor is actually an angel, thank you Madam for the straightforward tutorial!

Really helpful thanks.

very useful and clear

Thank you!!

Hi, thanks for this video.

You're welcome!! 😊

Nice. How do I convert it to ONNX using CUDA?

There is a bug:

InvalidArgument: [ONNXRuntimeError] : 2 : INVALID_ARGUMENT : Unexpected input data type. Actual: (tensor(int32)) , expected: (tensor(int64))

Hi, your comment is appreciated, and we want to follow up on this. Could you file an issue in ORT repo with details so we can take a look? github.com/microsoft/onnxruntime
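
The type mismatch in the error above typically means the input tensors were built from 32-bit integers while the exported model expects int64. If the same mismatch shows up on the JavaScript side, one possible fix is to build the inputs from a `BigInt64Array`. This is a minimal sketch, assuming the token IDs are available as a plain array of numbers. `toInt64TensorData` is a hypothetical helper, not part of ONNX Runtime, and the token IDs are placeholders.

```javascript
// Hypothetical helper (not part of ONNX Runtime): converts token IDs held as
// plain JS numbers into the 64-bit integer buffer an int64 tensor needs.
function toInt64TensorData(tokenIds) {
  const data = new BigInt64Array(tokenIds.length);
  tokenIds.forEach((id, i) => {
    if (!Number.isInteger(id)) {
      throw new TypeError(`token id at index ${i} is not an integer: ${id}`);
    }
    data[i] = BigInt(id);
  });
  // Shape [1, sequenceLength]: a batch containing one sequence.
  return { type: "int64", data, dims: [1, tokenIds.length] };
}

// Placeholder token IDs, not real tokenizer output.
const input = toInt64TensorData([101, 2023, 2003, 102]);
console.log(input.data instanceof BigInt64Array, input.dims);

// With onnxruntime-web loaded as `ort`, the pieces would be passed on as:
//   new ort.Tensor(input.type, input.data, input.dims)
```

The helper returns plain data rather than a tensor so it runs without the runtime loaded. Whether this resolves the reported error depends on where the int32 values originate, which the comment does not say.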

This is not "in the browser". This is still Node.js. That's server technology.